Zero-Trust and Need to Know Predominate

The Central Intelligence Agency is embracing the zero-trust concept for its own digital security, and is looking to industry to help it solve near-future challenges by expanding efforts to automate elements of incident response.

In a wide-ranging keynote address at DSEI on 15 September, the US foreign intelligence service’s Chief Information Officer, Juliane J Gallina, characterised the ‘moat-and-castle’ approach to cybersecurity – with air-gapped systems separated from networks – as ineffective and counter-productive. Instead, the CIA has moved away from a mindset of preventing intrusion to one in which staff “assume our perimeter protections don’t work at all.”

The zero-trust security model – widely popularised by a 2014 Google briefing paper – requires network users to constantly verify their identities and permissions. Gallina argued that this is the only sensible approach for any collaborative and networked enterprise to adopt, and that, while it may appear counterintuitive, it is entirely compatible with the Agency’s culture and ethos. “We have to assume that our perimeter doesn’t even exist, that we can no longer trust anyone,” she explained. “Our gift shop has t-shirts that say ‘trust no one’ – of course this is our culture. And we really mean it on the inside as well. Every digital entity, whether it’s a person or a non-person, must prove continuously, as if it’s the first time they’re connected from the worldwide internet. We verify explicitly; we only provide the least-privileged access at all times – just what you need, because you need to know.”

Gallina, whose background includes extensive experience in the space industry, contrasted the zero-trust approach with the mantra associated with NASA flight director Gene Kranz, whose autobiography was titled Failure Is Not An Option. That mantra clearly holds for one-of-a-kind spacecraft on a launch pad, or when human life may be at risk in orbit; but in terms of network and data protection, the definition of ‘failure’ has to be re-examined. Failure to prevent intrusion may be inevitable, but failure to protect data remains unacceptable. “We assume breach,” she confessed. “Yes, indeed, we assume failure is an option. ‘Failure Is Not An Option’ doesn’t mean there won’t be bad outcomes – it means that you have to judiciously address the risks, work together, lay out your options and take [the] next steps.”

Meanwhile, the Agency is eyeing a future in which human-machine teaming will help it stay ahead of digital adversaries. In the main, these technologies will help make sense of the increasingly vast amount of data made available by ever-more-capable sensors. “There are plenty of sensors,” Gallina continued. “Storage, networks, bandwidth – all of this is becoming cheaper and easier. But we have the secondary problem of trying to make sense of all that data. How do you get around it? You have to team with machines, and this is where the idea of artificial intelligence [AI] comes in […] My challenge to industry is to help us embed AI in every part of the cyber terrain […] The physical and logical elements that enable essential warfighting functions help us map the terrain, but that’s just the beginning of situational awareness. We need human-machine teaming throughout the spectrum, ultimately to help us with course-of-action recommendations, and I’m really challenging all of our industry partners to think about how to inject machine learning into every part of that analytic food chain.”

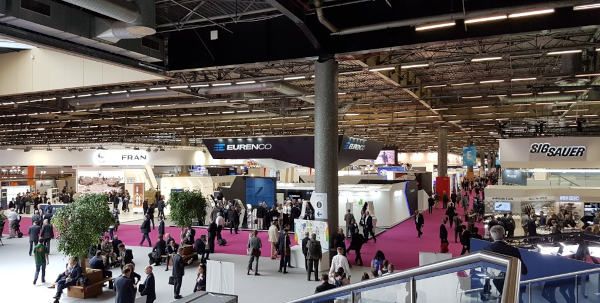

Angus Batey reporting from DSEI 2021 for MON